Edge computing is reshaping how we collect, analyze, and act on data by moving processing closer to the source: sensors, devices, and user endpoints. This proximity shortens the distance data must travel, which lowers latency and enables real-time decision-making for faster apps and smarter operations. From smart home gadgets to industrial sensors, the approach keeps critical insights near the data source, where edge devices can respond quickly and autonomously. In business settings, teams blend local analysis with cloud resources to balance speed, privacy, and scalability. As a foundational layer in modern IT, edge-enabled architectures are redefining how products, services, and systems interact.

A parallel way to describe this trend is to view it as on-site processing that places computation near data sources. Near-data processing, distributed analytics at the network edge, and fog-inspired approaches share the goal of reducing latency and keeping sensitive information close to devices. By assigning initial analysis and decisions to local devices, organizations can free centralized systems for deeper insights and broader coordination. This perspective complements cloud-centric designs, enabling resilient, scalable architectures that support real-time applications in manufacturing, healthcare, logistics, and consumer tech. In practice, teams deploy edge devices, gateways, and micro data centers to orchestrate these capabilities with secure, low-bandwidth communication back to core platforms.

1) What Is Edge Computing? Proximity-Powered Processing for Real-Time Decision-Making

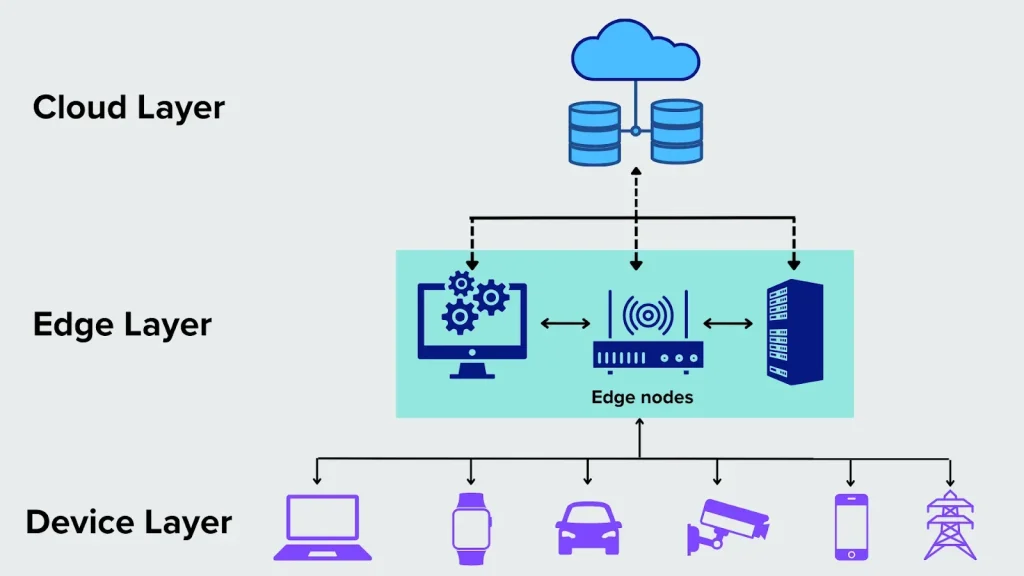

Edge computing shifts data processing from distant data centers to devices and micro data centers near the source of data generation. By processing sensor streams, camera feeds, and user interactions close to where they’re produced, it minimizes the distance data must travel. The result is lower latency, faster feedback loops, and the ability to act on insights in near real time, which is essential for responsive applications in manufacturing, smart cities, and consumer devices.

This proximity-based approach also reduces backhaul bandwidth needs and can improve resilience when connectivity is intermittent. With edge computing, organizations can deploy focused analytics, lightweight AI inference, and immediate control decisions at the edge, while more complex processing and long-term storage migrate to centralized clouds when appropriate. In practice, you’ll see edge devices and on-site servers handling initial computation to accelerate user experiences and operational workflows.
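As a minimal sketch of the split described above, the Python below collapses a window of raw sensor readings into one compact summary for upload and makes an immediate control decision locally. The names `WindowSummary`, `summarize_window`, and `needs_local_action`, along with the temperature-style values, are illustrative assumptions rather than part of any particular edge platform.

```python
from dataclasses import dataclass
from statistics import mean


@dataclass
class WindowSummary:
    """Compact record sent upstream instead of every raw reading."""
    count: int
    minimum: float
    maximum: float
    average: float


def summarize_window(readings: list[float]) -> WindowSummary:
    """Collapse one window of raw readings into a single summary."""
    if not readings:
        raise ValueError("window is empty")
    return WindowSummary(
        count=len(readings),
        minimum=min(readings),
        maximum=max(readings),
        average=mean(readings),
    )


def needs_local_action(reading: float, limit: float) -> bool:
    """Immediate control decision made on the device, with no cloud round trip."""
    return reading > limit


if __name__ == "__main__":
    window = [20.0, 22.0, 24.0]           # hypothetical sensor values
    print(summarize_window(window))       # one record replaces three readings
    print(needs_local_action(95.0, 90.0))
```

In this arrangement only the summary crosses the backhaul link, while the threshold check runs on every reading at the edge.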

2) Edge Computing vs Cloud: Balancing Speed, Cost, and Scale

Understanding the edge-cloud relationship is key to modern architectures. The cloud offers vast scalability, centralized management, and heavy-duty analytics, but it can fall short on latency and offline reliability for time-sensitive tasks. Edge computing brings computation closer to data sources to deliver instant decisions, which complements cloud capabilities in a hybrid model.

A thoughtful edge computing strategy uses the edge for real-time processing and privacy-sensitive tasks, while the cloud handles deep analytics, archival storage, and complex model training. Balancing the two lets an architecture optimize speed, control, and cost, and supports a flexible edge-cloud continuum across industries.
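One way to picture this division of labor is a small placement rule. The sketch below is a simplified assumption, not a standard algorithm: the `Task` fields, the `choose_placement` function, and the default 100 ms cloud round-trip figure are all hypothetical values chosen for illustration.

```python
from dataclasses import dataclass


@dataclass
class Task:
    name: str
    latency_budget_ms: float        # how long the caller can wait for a result
    privacy_sensitive: bool = False


def choose_placement(task: Task, cloud_round_trip_ms: float = 100.0) -> str:
    """Return "edge" or "cloud" for a task under two simple rules."""
    # Rule 1: privacy-sensitive data is processed where it is produced.
    if task.privacy_sensitive:
        return "edge"
    # Rule 2: if the cloud round trip alone exceeds the budget, stay local.
    if task.latency_budget_ms < cloud_round_trip_ms:
        return "edge"
    # Everything else can use the cloud's scale.
    return "cloud"


if __name__ == "__main__":
    for task in (
        Task("brake-control", latency_budget_ms=10.0),
        Task("face-blur", latency_budget_ms=500.0, privacy_sensitive=True),
        Task("model-training", latency_budget_ms=3_600_000.0),
    ):
        print(task.name, "->", choose_placement(task))
```

A real deployment would weigh more factors, such as cost and available hardware, but the same structure applies.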

3) IoT Edge Computing: Bringing Intelligence to the Data Source

IoT edge computing places analytics, models, and inference engines directly on or near sensors, cameras, and actuators. When devices can process their own data locally, they can identify events, detect anomalies, and trigger immediate actions without waiting for a centralized response. This capability reduces data volumes sent to the cloud and enhances privacy by keeping sensitive information on-site.

As IoT edge computing evolves, edge devices such as smart cameras, environmental sensors, and industrial controllers become intelligent partners in operations. Local inference enables real-time responses, from alerting maintenance teams to adjusting manufacturing lines, while preserving bandwidth and helping meet regulatory privacy requirements.
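To make local anomaly detection concrete, here is a minimal sketch that flags readings far from the recent average using a rolling z-score. This is one simple technique among many, not a method prescribed by any IoT platform. The class name `RollingAnomalyDetector`, the window size, the threshold of 3.0, and the five-reading warm-up are assumptions for illustration.

```python
from collections import deque
from statistics import mean, pstdev


class RollingAnomalyDetector:
    """Flags values that deviate strongly from a rolling window of readings."""

    def __init__(self, window_size: int = 20, threshold: float = 3.0):
        self.window = deque(maxlen=window_size)  # oldest readings fall off
        self.threshold = threshold

    def observe(self, value: float) -> bool:
        """Return True if value is anomalous relative to the recent window."""
        is_anomaly = False
        if len(self.window) >= 5:                # warm-up before judging
            mu = mean(self.window)
            sigma = pstdev(self.window)
            if sigma > 0 and abs(value - mu) / sigma > self.threshold:
                is_anomaly = True
        # The reading is stored either way, so a sustained shift in level
        # eventually becomes the new baseline.
        self.window.append(value)
        return is_anomaly


if __name__ == "__main__":
    detector = RollingAnomalyDetector(window_size=10)
    for reading in [10.0, 10.1, 9.9, 10.0, 10.2, 9.8, 10.1, 50.0]:
        if detector.observe(reading):
            print("anomaly detected locally:", reading)
```

Because the check runs on the device, an alert or actuator command can follow immediately, and only the flagged event needs to be sent upstream.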

4) Edge Devices and Infrastructure: Building Blocks for Local Processing

Edge devices, ranging from compact sensors to rugged industrial gateways, form the hardware backbone of local processing ecosystems. Complemented by micro data centers and distributed edge nodes, these components bring compute, memory, and storage nearer to the data source. The result is faster turnaround, localized analytics, and the ability to operate in environments with variable connectivity.

Managing edge infrastructure requires lightweight software stacks that can run containers, orchestration tools, and efficient update mechanisms on devices with constrained resources. Practical edge deployments emphasize maintainability, power and cooling considerations, and remote management so software updates, monitoring, and policy enforcement happen without manual on-site intervention.

5) Real-World Benefits and Use Cases of Edge Computing

The core benefits of edge computing are visible across industries: lower latency enabling real-time control, bandwidth savings from local pre-processing, and enhanced privacy by keeping sensitive data closer to its source. These advantages translate into tangible improvements in manufacturing throughput, safer smart city operations, and more responsive consumer devices.

In practice, use cases range from predictive maintenance in factories and real-time quality monitoring to on-site imaging analysis in healthcare and AI-powered recommendations at the edge in retail. Across these scenarios, edge computing advantages include faster decision cycles, reduced cloud dependency, offline capability, and greater resilience in environments with spotty connectivity.
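Offline capability is often built on a store-and-forward pattern: messages queue locally while the uplink is down and drain in order once it returns. The sketch below assumes a caller-supplied `send` function that returns `True` on success. The class name `StoreAndForwardBuffer` and the capacity value are hypothetical.

```python
from collections import deque
from typing import Callable


class StoreAndForwardBuffer:
    """Queues messages locally and forwards them when the uplink works."""

    def __init__(self, send: Callable[[dict], bool], capacity: int = 1000):
        self._send = send
        # Bounded queue: when full, the oldest message is dropped first.
        self._pending = deque(maxlen=capacity)

    def publish(self, message: dict) -> None:
        """Queue a message, then try to drain the queue."""
        self._pending.append(message)
        self.flush()

    def flush(self) -> int:
        """Send queued messages in order; stop at the first failure."""
        sent = 0
        while self._pending:
            if not self._send(self._pending[0]):
                break                      # uplink still down, retry later
            self._pending.popleft()
            sent += 1
        return sent

    @property
    def pending(self) -> int:
        return len(self._pending)


if __name__ == "__main__":
    link = {"up": False}                   # simulated connectivity flag
    buffer = StoreAndForwardBuffer(lambda msg: link["up"])
    buffer.publish({"id": 1})
    print("pending while offline:", buffer.pending)
    link["up"] = True
    buffer.publish({"id": 2})
    print("pending after reconnect:", buffer.pending)
```

A bounded queue is a deliberate choice here: on constrained devices, dropping the oldest data is usually preferable to exhausting memory during a long outage.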

6) Security, Privacy, and Governance at the Edge

Security at the edge must address a broader attack surface: device integrity, secure boot processes, encrypted communications, and robust identity management. A comprehensive strategy pairs strong device security with network segmentation and continuous monitoring to protect data as it moves from edge to cloud. Governance policies clarify what data stays at the edge, what is summarized, and what may be transmitted for centralized analytics.
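A governance policy of this kind can be expressed as a small lookup that decides, field by field, what leaves the device. The following is a sketch under stated assumptions: the field names, the `Handling` categories, and the choice to summarize with an average and a count are all hypothetical, and unknown fields default to staying local.

```python
from enum import Enum


class Handling(Enum):
    KEEP_LOCAL = "keep_local"    # never leaves the edge
    SUMMARIZE = "summarize"      # only aggregates are transmitted
    TRANSMIT = "transmit"        # sent to the cloud as-is


# Hypothetical policy for a health-monitoring device.
POLICY = {
    "patient_id": Handling.KEEP_LOCAL,
    "heart_rate_samples": Handling.SUMMARIZE,
    "device_status": Handling.TRANSMIT,
}


def apply_policy(record: dict, policy: dict = POLICY) -> dict:
    """Build the outbound payload permitted by the governance policy."""
    outbound = {}
    for field, value in record.items():
        # Fields missing from the policy are treated as KEEP_LOCAL.
        handling = policy.get(field, Handling.KEEP_LOCAL)
        if handling is Handling.TRANSMIT:
            outbound[field] = value
        elif handling is Handling.SUMMARIZE and value:
            outbound[f"{field}_avg"] = sum(value) / len(value)
            outbound[f"{field}_count"] = len(value)
    return outbound


if __name__ == "__main__":
    record = {
        "patient_id": "p-1",
        "heart_rate_samples": [60, 70, 80],
        "device_status": "ok",
    }
    print(apply_policy(record))
```

Defaulting unknown fields to `KEEP_LOCAL` means a newly added sensor field cannot leak upstream until someone explicitly classifies it.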

Ongoing risk management, including threat modeling, regular audits, and secure software updates, helps maintain trust in edge deployments. When combined with end-to-end security principles that span edge devices, local networks, and cloud services, organizations can protect privacy and support compliance while enabling fast, edge-driven decision-making.
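One small piece of a secure update process is checking that a downloaded package matches a published digest before installing it. The sketch below covers integrity only and assumes the expected SHA-256 value arrives over a trusted channel. Production systems typically also verify a cryptographic signature, which this example does not attempt. The function names are hypothetical.

```python
import hashlib
import hmac


def sha256_hex(payload: bytes) -> str:
    """Hex-encoded SHA-256 digest of an update package."""
    return hashlib.sha256(payload).hexdigest()


def is_update_intact(payload: bytes, expected_sha256: str) -> bool:
    """Compare the package digest against the expected value."""
    # compare_digest performs a constant-time comparison.
    return hmac.compare_digest(sha256_hex(payload), expected_sha256.lower())


if __name__ == "__main__":
    package = b"firmware-v2"               # stand-in for real update bytes
    published = sha256_hex(package)        # would come from the vendor
    print(is_update_intact(package, published))
    print(is_update_intact(package + b"tampered", published))
```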

Frequently Asked Questions

What are the key edge computing benefits for real-time applications?

The key benefits are lower latency, bandwidth savings, improved privacy, and resilience when connectivity to the cloud is intermittent, all of which enable faster decisions right at the data source.

What are the main edge computing advantages over traditional cloud-based architectures?

The main advantages are real-time processing, reduced backhaul traffic, offline capability, and localized decision-making, while the cloud remains strong for large-scale analytics and long-term storage.

Edge computing vs cloud: how do they complement each other?

Many organizations use a hybrid approach that balances speed and scale: the edge handles fast, on-site processing for immediate actions, while the cloud handles deep analytics and comprehensive data storage.

What is IoT edge computing and how does it relate to edge devices?

IoT edge computing describes processing data on devices near data generation, using edge devices such as gateways, cameras, and embedded boards to run analytics on-site for faster responses and improved privacy.

What are common edge devices used in edge computing deployments?

Common edge devices include rugged edge gateways, micro data centers, and sensor-equipped devices that perform local processing at the network edge to reduce latency and backhaul needs.

What security considerations are essential for IoT edge computing and edge devices?

Security considerations include secure boot, encryption, identity and access management, regular software updates, and network segmentation to protect IoT edge computing and edge devices from threats.

| Aspect | Key Points | Notes/Examples |
|---|---|---|
| What is Edge Computing? | Processing data near the source (edge) instead of sending everything to a centralized cloud. This can involve local micro data centers, edge servers, or on-device compute. Benefits include lower latency, faster decision-making, reduced backhaul traffic, and greater resilience. | Examples: smart cameras on-site, industrial machines monitoring in real time, autonomous vehicles processing sensor data locally. |
| Why Edge Computing Is Rising Now | Driven by data volume, faster networks (5G and beyond), and AI models that run efficiently on modest hardware. | Why it matters: local processing enables privacy-conscious, responsive systems and reduces network burden. |
| Benefits & Use Cases | Lower latency; bandwidth savings; improved privacy and data sovereignty; reliability and resilience; personalization and offline capability. | Use cases span manufacturing (Industry 4.0), smart cities/transportation, healthcare, retail/consumer IoT, and edge-based AI/ML inference. |
| Edge vs Cloud: The Difference | Cloud offers scalability and centralized management for analytics and large workloads. Edge brings compute closer to data sources for low latency and privacy. A hybrid edge-cloud continuum is common. | Hybrid approaches balance speed, cost, and intelligence; design around data governance, orchestration, and security. |
| Security, Privacy & Governance | Edge expands the attack surface but can reduce data movement. Requires device security, secure boot, encryption, access controls, and network segmentation. | Data governance defines what stays on the edge vs. what is sent to the cloud; regular audits and secure updates are essential. |
| Implementation & Best Practices | Start with a clear use case; choose the right edge architecture; use lightweight software stacks; plan data governance; prioritize security; ensure observability and remote management. | Observability and management reduce operational complexity as edge deployments scale. |
| Industry Examples & Road Ahead | Manufacturing plants with edge monitoring, logistics route optimization at edge centers, on-site healthcare data processing, and AI-enabled consumer devices. | Future trends include deeper AI at the edge, autonomous services, and resilient networks forming an integrated edge-cloud ecosystem. |

Summary

Edge computing is transforming how technologies process data by moving compute closer to where data is generated, enabling real-time insights and reducing latency. This shift supports faster decision-making, bandwidth efficiency, and improved privacy while complementing centralized cloud capabilities. A hybrid edge-cloud approach often yields the best balance of responsiveness and analytical depth, particularly in manufacturing, smart cities, healthcare, and consumer technology. As devices proliferate and AI moves toward the edge, governance, security, and scalable management become essential to realizing resilient, intelligent systems.